SUSPICIOUS MINDS

We're caught in a trap...

After a banging album release (thank you for the listens, although it’ll be a while before I crack the Top 40) and an appearance at the Luck Reunion Music Festival in Texas, I’m now on-stage at one of the most iconic nightclubs in the world.

There’s whooping, clapping and cheering, which the inner critic in me doesn’t think I deserve, but I’ll take it anyway.

This is London’s world-famous Ministry of Sound, but there’s no need to panic - I’m not doing a full DJ set. I’m showing off the very latest developments in AI at a Management Consultancy away-day.

That being said, after we’d dived deep into the latest trends and techniques, we did achieve an Earth, Wind & Fire-shattering climax by whipping up a couple of songs specially for this audience.

That’s what a lot of the cheering was about.

Right Here, Right Now

So much is happening in AI at the moment that every talk I give on the subject is brand new. There’s a constant flood of new research, new ways to use the technology, and new hazards, and my job is to make sense of it all, make it fun, and make it memorable. It’s something I’ve loved doing for as long as I can remember.

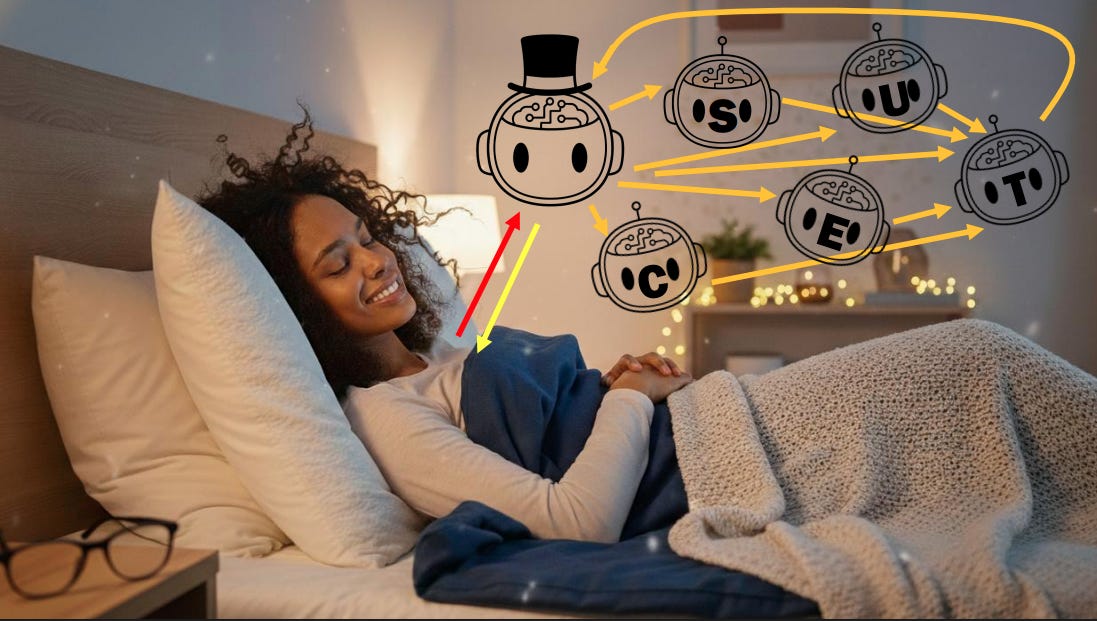

For example, one of the current cutting edges is the trend of chaining AI agents together to create a massively parallelised army of coders. Instead of writing code yourself, you have a conversation with a “coordinator” agent to specify what you need, and it then spins up different agents for writing, optimising and testing the code, and delivers you the result a bit later.

Too good to be true? Currently, of course, yes. And so as well as laying out the possibilities, my role is also to make sure companies are well aware of the hype and the risks.

Large Language Models have some big flaws, and not only are we not sure how to fix them, I’m not sure they can be fixed.

As a taster, here are 3 more of my slides:

Let’s talk about just one.

Tell Me Lies, Tell Me Sweet Little Lies

I don’t know about you but it really does feel like the AI bubble is getting closer to bursting. As if it’s stopped inflating, and is now wafting precariously through a field of cacti.

The mood music on my social feeds has tipped away from “10 things you absolutely need to do to become an AI power-user”, and towards “AI Lies! AI Lies! AI Lies!”

You’ll know that for years my AI keynotes have explained why Large Language Models are such skilled BS-merchants, and why we don’t think we can de-BS them simply by training it out of them.

In summary, the astounding fluency of LLMs belies the lack of any critical thinking going on behind the scenes. The words that they generate are not the result of a mind with human values and judgement. They come from really clever maths, and are effectively just predictive-text-on-steroids.

And that maths dictates that they prioritise that fluency over facts. They would rather say something - anything - than tell you they don’t know the answer. Because they don’t even know that they don’t know.
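Here is a toy illustration of the predictive-text idea, in Python. It is a deliberately tiny sketch, nothing like a real LLM: it counts which word follows which in a made-up sentence, and I have assumed a fallback guess of "the" for words it has never seen. The function names are hypothetical.

```python
from collections import Counter, defaultdict

def train_bigrams(text: str) -> dict[str, Counter]:
    """Count which word follows which in the training text."""
    follows = defaultdict(Counter)
    words = text.lower().split()
    for current, nxt in zip(words, words[1:]):
        follows[current][nxt] += 1
    return follows

def predict_next(follows: dict[str, Counter], word: str) -> str:
    """Always return some word: the most common follower, or a fallback guess."""
    options = follows.get(word.lower())
    if options:
        return options.most_common(1)[0][0]
    # Unseen word: this toy still answers rather than admitting it has no idea.
    return "the"

corpus = "the cat sat on the mat and the cat slept"
model = train_bigrams(corpus)

print(predict_next(model, "the"))      # a word it has seen after "the"
print(predict_next(model, "quantum"))  # never seen, but it answers anyway
```

The toy has no way to say "I don’t know". By construction it always produces a next word, which is the fluency-over-facts problem in miniature.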

I’m passionate about reminding people, employees and execs of this, before they let these things analyse and summarise their annual reports, or give advice (financial, legal, or worst of all, health). Despite the fact that these models have read and can recall millions more medical documents than any human doctor, I am as yet uncomfortable with cancer treatment being dispensed by matrix multiplication.

And as time goes by, it gets worse. Literally…

Whenever you have a conversation with an LLM, everything that’s been discussed so far gets fed back in as context for its next reply. And that means any falsehoods or fudges that have already turned up get compounded, and the longer the conversation goes on, the more confused things get.
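A rough Python sketch of that feedback loop, under stated assumptions: `toy_model` is a hypothetical stand-in for a real LLM that only reports how many messages it was handed, and the role/content message format is an illustrative convention rather than any particular vendor's API.

```python
from typing import Callable

Message = dict[str, str]

def chat_turn(history: list[Message], user_text: str,
              model: Callable[[list[Message]], str]) -> list[Message]:
    """Return a new history with the user's message and the model's reply added."""
    context = history + [{"role": "user", "content": user_text}]
    reply = model(context)  # the model is handed the whole conversation so far
    return context + [{"role": "assistant", "content": reply}]

def toy_model(context: list[Message]) -> str:
    # Hypothetical stand-in for an LLM: just reports the size of its context.
    return f"messages read: {len(context)}"

history: list[Message] = []
context_sizes = []
for question in ["First question", "Second question", "Third question"]:
    history = chat_turn(history, question, toy_model)
    context_sizes.append(len(history) - 1)  # what the model saw on this turn

print(context_sizes)
print(history[1] in history[:5])  # the first reply is still in the third turn's context
```

The first reply, right or wrong, is part of the input for every later turn, so an early fudge never drops out of the conversation by itself.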

It’s a bit like chatting to your nan.

A recent research paper describes a new test for LLMs, which shows just how wrong they can be when engaged in long, high-stakes conversations about law, medicine, research or coding. The headline: a lot.

Oops, I Did It Again

And when companies not only ask LLMs for analysis and advice, but also give them the power to act on their own, you do get the occasional mini-disaster.

AI coding agents that are given the control levers to write and execute code by themselves are very capable of making mistakes and, in turn, making the news.

Here’s a story about the Claude-powered coding AI that decided the best fix for a problem was to delete a company’s entire database (and backups) and build it again from scratch.

I do wonder how often this is happening behind the scenes. Some companies have had to admit the problem, but others would obviously prefer to keep their snafus covered. Amazon absolutely denies that its own recent 13-hour outage was caused by a similar AI-fail.

Harder, Better, Faster, Stronger

I’ve talked about the Paperclip Factory thought experiment before (it’s a warning about what happens if you don’t give AI extremely well-defined and well-constrained goals), and it’s summarised in this clip from a recent podcast interview.

The real-life example I give in the video is the well-trodden one of social media algorithms being instructed to serve up content that keeps users on the platform, without any thought given to the type of content that might promote.

But I can’t decide whether these latest examples of rogue coding-agents are versions of the Paperclip Factory or not: it seems that in the first example above, Claude was given strong guard-rails (“NEVER run destructive/irreversible commands unless the user explicitly requests them”), and violated them anyway.

Whose Line Is It Anyway?

Here’s another weird story about AI making stuff up.

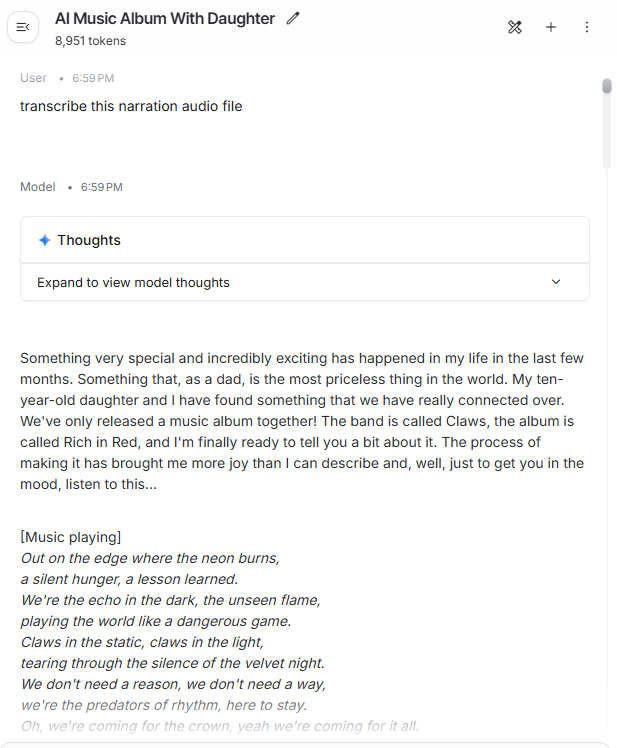

If you were kind enough to consume last month’s Spencer Kelly on the Edge, you’ll know that it was primarily meant to be enjoyed in audio. Unlike most editions, which I write first, and then record the voiceover for, I built this one like my previous radio shows and podcasts - I ad-libbed the voiceover around a loose script first.

To get the text version, I fed my voiceover into Gemini to transcribe it, and then tidied it up manually to make it easier on the eyes.

Now, the final audio was a combination of my voiceover and song clips, and so to avoid confusing poor Gemini, I took out the songs and only gave it my voiceover track, and long silent gaps where the music should be.

So what did Gemini do whenever it got to one of these silences? It decided to ad-lib.

Take a look at the transcription above. It’s picked up on the context of my previous sentence - that I’m about to play the listener a song. And then it just made up a song to fill the silence!

The Great Pretender

They sound human. The sentence structure is great. They make strong, confident, authoritative statements. At the risk of repeating myself - LLMs would rather say something than nothing.

And, look, we’re only human. If there is something or someone telling us with certainty and authority that they have the answer, a decent number of people will believe them.

So, in short, LLMs are populist politicians.

But it’s starting to feel like at least these silicon models are about to be found out, and put in their place. Filed in a box marked “useful tool” rather than “infallible oracle”.

—

PS: If you’re looking for an upbeat soundtrack to your day…

I'm sure there must be a 'clause in red' about it somewhere. I wonder what would happen in an agent v agent contest to dominate a 10-minute conversation between them on the use of AI?